Welcome!

Hi, I’m Janek Haberer, a final-year PhD student in Computer Science at Kiel University, Germany. My work focuses on designing efficient deep learning methods for resource-constrained environments, with research spanning Edge AI, dynamic vision transformers, federated learning, and progressive image compression. I have four years of experience and eight papers published in peer-reviewed conferences and journals: NeurIPS Main Conference, CVPR Main Conference, ICML Workshop, IEEE Access, MobiSys, CoNEXT Workshop, and EWSN Workshop. I am passionate about advancing machine learning technologies and collaborating on impactful projects.

Featured Publications

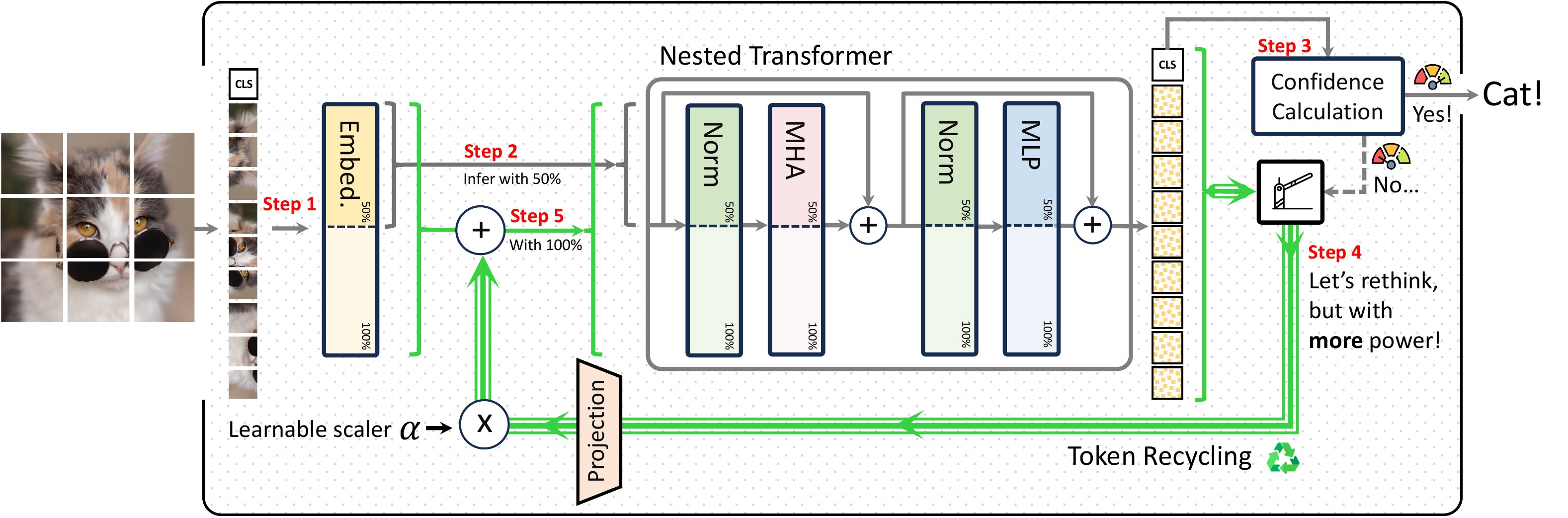

ThinkingViT: Matryoshka Thinking Vision Transformer for Elastic Inference

A. Hojjat, J. Haberer, S. Pirk, O. Landsiedel

CVPR'26: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2026

ThinkingViT is a nested Vision Transformer that dynamically adjusts inference computation based on input difficulty. It progressively activates attention heads across thinking stages, stopping early when prediction confidence is high.

[arXiv] | [GitHub]

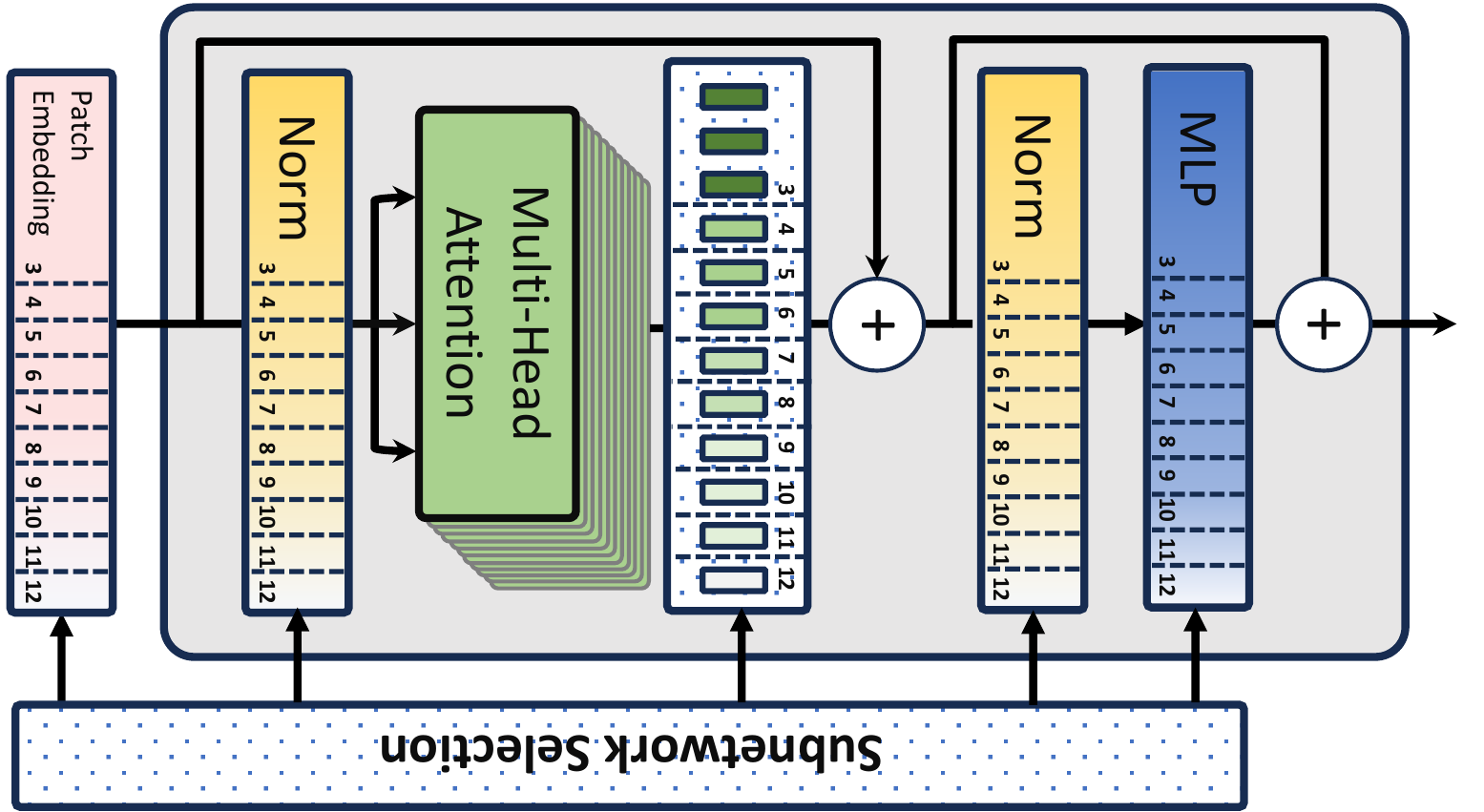

HydraViT: Stacking Heads for a Scalable ViT

J. Haberer, A. Hojjat, O. Landsiedel

NeurIPS'24: Advances in Neural Information Processing Systems 37, 2024

HydraViT scales Vision Transformers (ViTs) by stacking attention heads, enabling efficient and scalable deep learning in resource-constrained and dynamic environments.

[Published Version] | [OpenReview] | [arXiv] | [GitHub]